AWS Big Data Blog

Using Attunity CloudBeam at UMUC to Replicate Data to Amazon RDS and Amazon Redshift

Matt Yanchyshyn is a Principal Solutions Architect at AWS.

Brad Helicher, Director of Cloud Business at Attunity, also contributed to this post. Attunity is an APN Big Data Competency Partner.

Introduction

University of Maryland University College’s mission is to provide a quality education at an affordable cost to busy professionals, mainly adults who are juggling work and families and, often times, military service.

UMUC offers more than 95 online degree and certificate programs in today’s most in-demand fields—including data analytics and cybersecurity—and is the largest institution in the University System of Maryland, as well as the largest public, online university in the country.  The university is a leading provider of educational services to the military since 1949 and offers classes on military installations in more than 25 countries around the world.

The university is a leading provider of educational services to the military since 1949 and offers classes on military installations in more than 25 countries around the world.

Providers of higher education like UMUC face increasing pressure to attract the best students and supply a quality education at the right price. To stay ahead of the curve, colleges and universities must ultimately seek a competitive advantage, constantly locating opportunities for improvement. To achieve this, UMUC launched a big data strategy on the strengths of Amazon Web Services.

“To improve student outcomes and attract new students, our team set out to combine disparate data sources from throughout the University and leverages analytics to extract patterns and identify actionable opportunities.”

~Darren Catalano, VP of Analytics at UMUC

UMUC’s Analytics Initiative

UMUC wanted to organize and derive insights from a combination of the following four core datasets, totaling 10 terabytes:

- Learning Management System: Data related to online student activities including attendance, academic progress, participation and tool usage.

- Student Information System: Data related to student enrollments, course schedules, degrees awarded, faculty information and many other important functions.

- Financials: General Ledger and Student Financials data related to revenues, expenses and student payments.

- Customer Relationship Management (CRM): Data related to prospective students, applicants and service center inquiries.

The source data exists largely in a structured format and is currently stored in a mix of on-premises Oracle and SQL Server databases, Salesforce, and other cloud-based service applications. UMUC’s challenge is to aggregate these disparate data sources, normalize the data, and then load it into a data warehouse so they can analyze the data. This is a classic ‘data integration challenge’:

- Extract data from source systems

- Stage the data in a relational database and apply transformations

- Load data into a data warehouse

- Run analytics and provide a visualization layer

As a first step, UMUC selected Amazon Redshift for its data warehouse, citing its high performance and low cost. To address the data integration challenge, UMUC turned to the AWS Marketplace and chose Attunity CloudBeam for Amazon Redshift.

Attunity CloudBeam

Attunity CloudBeam for Amazon Redshift is a solution that automates and accelerates data loading and incremental changes from heterogeneous data sources to Amazon Redshift. You can use it to load data from a mix of both on-premises and cloud-based data sources, such as data stored in Oracle DB and Microsoft SQL Server in your data center combined with Amazon RDS for Oracle in the cloud. It supports ongoing change data capture (CDC) for incremental updates following the initial load and uses WAN acceleration and automatic retries to speed up the time it takes to move the data and make the process more reliable. In other words, CloudBeam takes care of the heavy lifting of aggregating and moving your data from point A to point B so you can focus on analytics.

Attunity CloudBeam uses a proprietary transfer protocol that supports multi-part transfers, concurrent sessions, streams, SSL encryption and block-level recovery. By using the tool you can achieve 10-12X performance gains over standard copy while still ensuring reliable delivery and security. Attunity provides a table benchmarking large datasets over different connections.

CloudBeam runs on its own Amazon Elastic Compute Cloude (Amazon EC2) instances, separate from the databases servers or instances, and communicates with Attunity Replicate agents running on Windows Server hosts wherever the data sources are located. No software is installed on the database servers themselves since Replicate communicates directly with the databases via their native interfaces, limiting the impact on performance during replication operations. In the case of SQL Server, Attunity Replicate accesses the Transaction Logs (TLOGS) to capture data changes. For Oracle DB, Attunity Replicate uses either Logminer or BFILE to capture changes from the Redo and Archive logs.

UMUC’s CloudBeam Use Case

In the past UMUC was using Oracle Streams to collect data from Oracle Databases and load it into an ODS (Operational Data Store) for transformation using Oracle Data Integrator (ODI), before ultimately loading into a data warehouse for analysis. However, some of the data sources are located on Microsoft SQL Server databases and Oracle Streams is not able to load from non-Oracle databases. To work around this challenge, the team at UMUC had to manually extract data from their SQL Server databases and combine it with their Oracle source data–a time-consuming process.

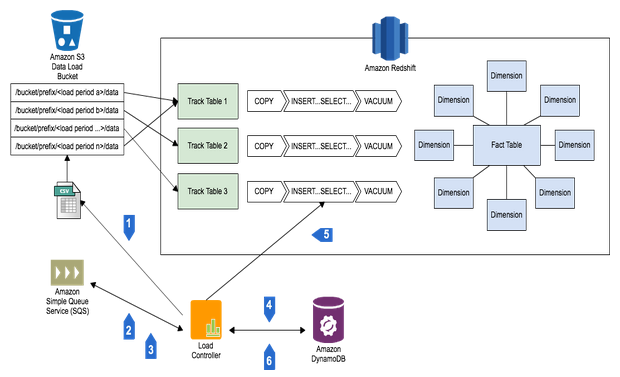

UMUC leveraged CloudBeam to both speed up their data movement and have a single point of management for all data movement. This gives the University greater visibility and flexibility when they choose to add or remove data sources or targets in the future. The engineers at UMUC also found that Attunity was far easier to setup and debug than their old Oracle Streams-based workflow. Specifically, UMUC uses CloudBeam to move the data from hosted Oracle DB and Microsoft SQL Server into an Operational Data Store (ODS) located in an Amazon RDS Oracle Database instance. ETL Transformations are then applied and data loaded into Amazon Redshift where it can be accessed by various business intelligence tools for analysis. UMUC also uses Attunity to occasionally move some of the previously loaded data in Amazon Redshift back into Amazon RDS to be referenced by stored procedures in support of Institutional Research.

Sourcing data from these core datasets, UMUC can now make sense of patterns, including:

- Understanding enrollment trends and demand for academic programs

- Measuring student and faculty engagement in online course

- Analyzing patterns of re-enrollment and course taking behaviors

- Identifying ‘at risk’ students that that need assistance

- Analyzing the effectiveness of marketing campaigns

Beyond just saving time, the engineers at UMUC realized that Attunity CloudBeam could be used to quickly validate the data replication processes. In their case, student data could be stored in a variety of systems such as Oracle Peoplesoft, Amazon RDS and Microsoft SQL Server. Attunity is able to extract and combine data from all source systems and load into a common database stored in Amazon Redshift. From there it’s easy to execute simple SQL queries to isolate any differences in the data stored across multiple tables originating from different data sources.

UMUC used the AWS Marketplace to purchase Attunity CloudBeam and had it configured in a matter of hours, replicating data shortly thereafter. Even with the complex data flow, UMUC’s centralized management of Attunity tasks runs on just a single Amazon EC2 machine, achieving near real-time replication across the mesh of sources & targets.

Attunity provides a detailed walk-through of installing Attunity CloudBeam and Attunity Replicate, including how to configure replication for both on-premises and Amazon RDS-based Oracle DB and Microsoft SQL Server data sources

Conclusion

Calling upon the strengths of Amazon Redshift, Amazon RDS, the AWS Marketplace, and Attunity CloudBeam, UMUC successfully implemented an end-to-end data analysis platform. UMUC achieved operational cost savings and they’re now able to identify actionable opportunities to increase enrollment and provide the right education to the right students.

If you have questions or suggestions, please leave a comment below.

————————————–

Related:

Best Practices for Micro-batch Loading on Amazon Redshift

Using Amazon Redshift to Analyze your ELB Traffic Logs